What does AI mastering mean for artists, engineers, and music?

AI mastering services are reaching new heights of speed and quality, ushering in a new era of AI music production. We speak to some of the industry’s leaders to learn more.

Image: Getty / westend61

Before there were vocal clones, text-prompt song generation and copy-and-paste musical styles, there was AI mastering. Way back in the halcyon days of 2014, mastering became the music industry’s canary in the coal mine when LANDR launched its automated mastering service.


There was panic in the streets, industries collapsed, racks of outboard gear were set ablaze, and gangs of feral mastering engineers roamed the streets looking for revenge against their new AI overlords. Just kidding. That initial launch was actually more of a whimper than a bang; there was some grumbling, some hand-wringing, and then everyone quickly got back to business as usual. However, AI anxiety has now returned bigger than ever, and this time, maybe, it’s justified.

Mastering has long been referred to as the ‘dark arts’ of music production – but on paper it all sounds quite simple. After a song has been recorded and mixed, a mastering engineer performs a series of relatively small sonic tweaks to finalise the track for release: a little EQ, some stereo imaging, the right amount of compression, et voilà! You have a song that’s ready to stream and will, ideally, sound slick on anything from cheap earbuds to high-end studio monitors.

Of course, achieving professional-quality results is far harder than it sounds, and certain mastering engineers have achieved near-legendary status for their ability to elevate a track to perfection. For this very reason, mastering has always had an air of exclusivity around it, or at least it did.

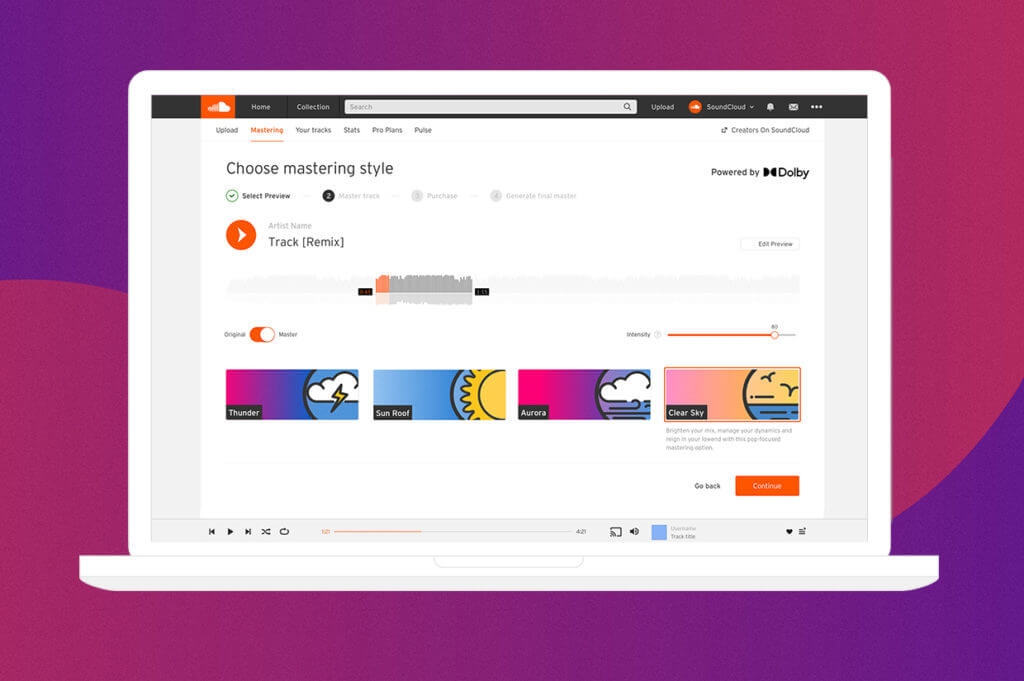

In case you haven’t heard, AI technology has been advancing by leaps and bounds, and automated mastering services can now deliver results that eclipse what was possible even a few years ago. The field has also grown much more crowded, with SoundCloud, CloudBounce, RoEx, and others joining LANDR in offering affordable mastering of increasing quality.

One of the most interesting new kids on the block is Masterchannel, a Norway-based company launched in 2022 that uses an approach known as ‘reinforcement learning’ to achieve its results.

“Many of our competitors have built their tech on huge data sets with millions of songs,” says company co-founder Christian Ringstad Schultz. “When you upload a track, they find the most relevant song from the dataset, put on those presets, and then tweak it afterwards.”

By contrast, Masterchannel says it worked with thousands of human engineers to establish ‘benchmark results’, i.e., an objective standard against which the AI’s output can be measured. When a track is uploaded, Schultz says, the algorithm works in a similar manner to a mastering engineer: it tries out different approaches and then receives positive or negative feedback on the decisions it made. “Rather than working with one mastering engineer, with this approach you get thousands of engineers in one automatic system.”

One of the chief benefits is a system that is genre-agnostic. “There are new genres popping up every week,” Schultz points out. “Normally, you’d have to curate a whole new data set and tailor it for that sound. With Masterchannel, if there’s a new genre, or if we see some errors happening in the output, we can just go back and tweak some of the benchmarks. It makes everything much more flexible.”

While reinforcement learning is at the heart of how Masterchannel works, Schultz says that the company also uses a general dataset of songs for deep learning, and that the two approaches can be complementary. “We can use reinforcement learning and add a deep learning model on top of that,” Schultz says. “That can have a lot of positive effects by bringing the costs down and accelerating the process even more.”

While AI companies are experimenting with different technological approaches, customers are also busy putting these services to an increasingly broad range of uses. “It’s really fascinating to see how people use the stuff that you build – as opposed to the way you thought they’d use it,” remarks Daniel Rowland, LANDR’s head of strategy and himself an accomplished audio engineer. “For example, tons of people use LANDR for mix referencing, or on individual music stems like drums or synths, and also on sound effects for films.”

The primary user base for AI mastering has also shifted from bedroom producers and early-career artists to people working across the entire spectrum of the music industry. “We have well-known composers who use LANDR just because they have to iterate through cues and revisions so fast,” Rowland says. Similarly, while Masterchannel originally launched as a direct-to-artist service, Schultz says a number of established artists, producers, and even mastering engineers now use it. “It’s the full spectrum,” he says. “We see it used for prototyping, as a kind of AI co-pilot during production, but also for final releases. We even see interest from engineers who want to ‘clone’ their production style in a similar way to what we’ve seen with Drake.”

Perhaps one of the biggest changes, with the most long-term impact, is the use of AI mastering services by some of the industry’s largest customers – movie and TV studios, game developers, and record companies. “It’s already happening,” says Schultz. “We are doing tests right now with some of the leading companies here in Norway. For them, it’s about the volume of material and how quickly it can get done – and this allows them to cut costs.”

Which brings us back to those rampaging gangs of newly redundant mastering engineers. We’re not looking at some sort of overnight extinction-level event, but change is clearly coming. As the company that kicked off the whole conversation, LANDR has in fact made significant moves to drive work toward human engineers rather than siphon away business. “We have dozens of mastering engineers on the LANDR website that you can hire,” Rowland emphasises. “We’re very open about not wanting to replace mastering engineers, we just want people to find what works best for their project, within their budget – AI or otherwise.”

Schultz’s view of the issue is a little more frank. “I think for the people that can afford them, there will still be a need for mastering engineers. But, to a large degree, the engineers who aren’t that qualified or don’t really know what they’re doing might get fewer jobs.”

That may be a bitter pill to swallow for some, but Schultz’s logic makes sense. Engineers with sub-par skills will certainly struggle to compete against consistent (and affordable) automated mastering, while studio savants will probably retain their niche in the upper echelons of the industry.

There’s one problem with this scenario: highly skilled mastering engineers don’t arrive fully formed in the birthing suite. Everyone sucks at the start; everyone needs practice and experience to achieve the skills of a Bob Ludwig or a Bernie Grundman. How will the next generation of engineers develop those skills in an environment where only the cream of the crop gets paid? It’s an open question.

However, all of this assumes that there will even be future generations of mastering engineers. “I believe that a lot of what we call mastering might diminish over time,” Schultz speculates. “If you look at the new generation coming up, they don’t necessarily know or care about the term ‘mastering’; it’s just about having a professional-sounding track. So, I think a lot of these production steps will merge into one.”

The possibility that mastering as a separate step in the production process might simply cease to exist is not all that far-fetched. For Masterchannel, Schultz says the ultimate goal is to make the process ever more seamless and simple: “In the long term, we’re building our tech to be applicable to every domain where audio is used: regardless of whether you’re posting a video on YouTube, making a voice over for a game in Unity, or creating music to be released on Spotify, you should have a button that automatically detects what kind of audio it is and optimises it.”

It’s a striking future vision that holds some undeniable positives. As Rowland points out: “Labels are mastering and releasing content that previously never would have seen the light of day due to the prohibitive cost of mastering.” More musical diversity can only be a good thing, and giving non-label artists affordable mastering options is similarly beneficial. There is also more audio being produced, for a wider range of mediums than ever before. Put simply – there has to be an industrial-scale solution for this ever-increasing flood of content.

At the same time as we consider these benefits, it’s worth stepping back and considering what the purpose of musical mastering actually is.

By and large, a well-mastered track is intended to meet the ‘industry standard’ in terms of loudness, EQ, stereo imaging, and so on. However, the industry standard has changed significantly from decade to decade. A song mastered in the ’90s or early 2000s sounds noticeably different from one mastered in 2020. Why? Because someone broke the rules. An engineer did something different from the standard, others heard it, liked it, copied it, and a new industry standard was born. Alongside genres, styles, and instruments, mastering is core to how the sound of popular music evolves.

The question is: can we count on ‘one-click’ AI mastering to creatively break the rules? Will a song mastered in the year 2030 sound noticeably different from one mastered in 2060? Let’s hope a little creative chaos can be coded in.