Ghost in the drum machine: How creative AI is kicking off a paradigm shift in music

Meet the artists and developers leading the next evolution of songwriting.

Image: Getty Images

Nothing captures the popular imagination quite like artificial intelligence. Since as far back as the 19th century, soothsayers have been promising it and warning against it in equal measure. While we have yet to achieve a post-scarcity utopia or descend into a robot-ruled wasteland, year upon year, little by little, many of those predictions have jumped from the pages of sci-fi novels and into news headlines as ever-increasing computing power turns future fantasies into tangible reality.

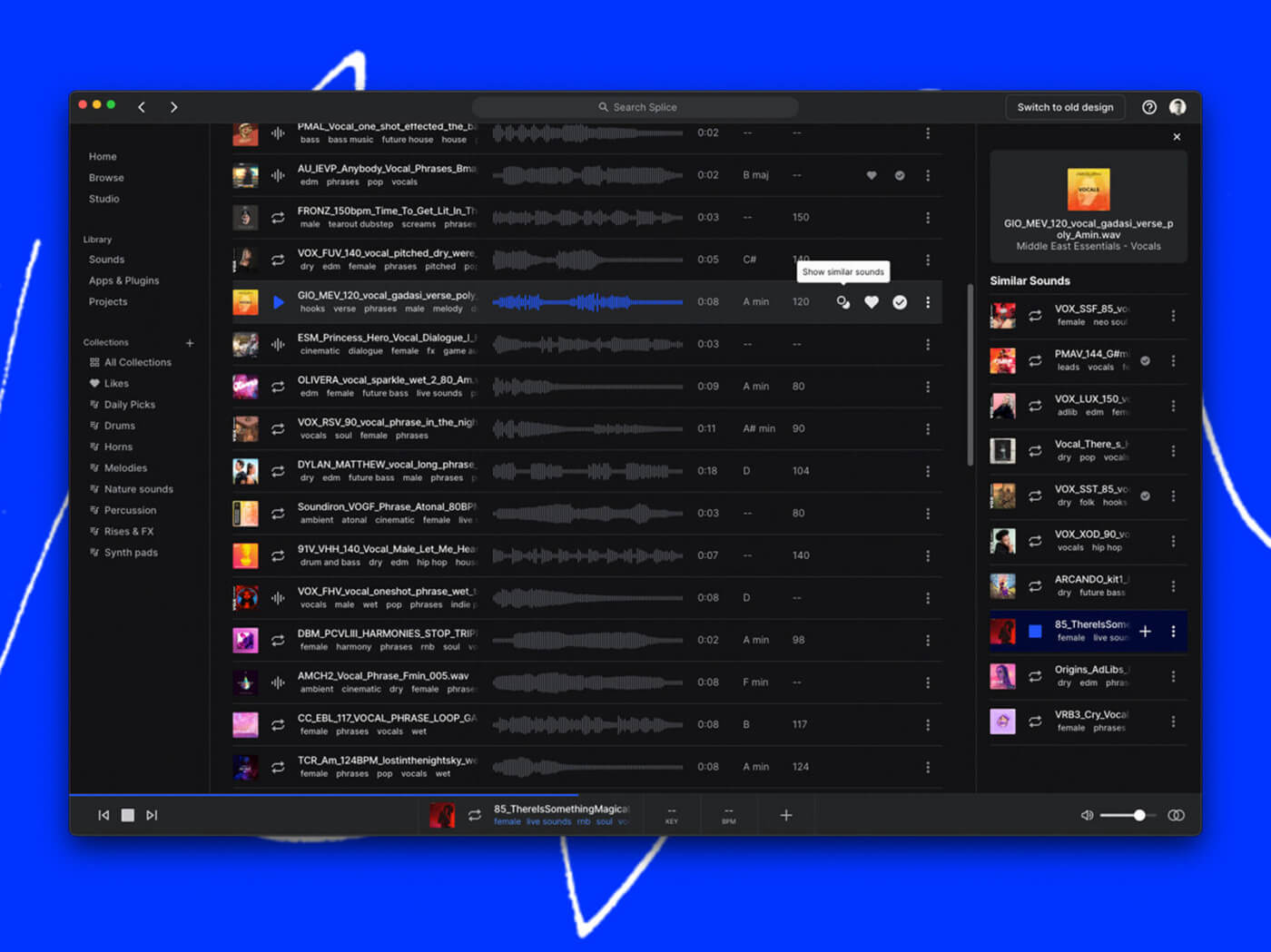

From law enforcement to medicine and visual arts to weaponry, the real-world impacts of AI are already being felt. It’s the same with music. Tech’s best and brightest are hard at work trying to streamline the songwriting process or replace it altogether: Splice’s Similar Sounds uses AI to scan thousands of samples before offering the best kick to complement your snare; Orb’s Producer Suite generates rhythms, melodies and chord progressions to help you get started on a track; and services like Amper need only a few keywords to create fully realised background music.

So, are composers and songwriters staring into the void of their own obsolescence? There’s certainly some justified anxiety about what these tools will mean for the industry at large. For many artists, however, AI is not a threat but an opportunity – a new instrument that could usher in a musical renaissance comparable to the advent of 1980s sampling culture.

Here, we talk to some of the artists and developers who are harnessing the creative potential of AI to make new sounds, new musical forms, and new ways of working.

Of course, AI music is not a monolith. Various approaches are being taken to reach a diverse set of goals, but one thing many of the most innovative projects have in common is their use of artificial neural networks – computer systems whose architecture is inspired by the human brain. These in turn power deep learning algorithms which pass an input, in this case audio, through sequential network layers, progressively extracting more information with each step.
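For the curious, the idea of passing audio through sequential layers can be sketched in a few lines of Python. This is a toy illustration only, not any artist's or company's actual model: the layer sizes, the random (untrained) weights, and the names `dense_layer`, `random_layer`, and `forward` are all assumptions made for the example. A real system would learn its weights from large amounts of recorded music.

```python
import math
import random


def dense_layer(inputs, weights, biases):
    """One fully connected layer followed by a tanh non-linearity."""
    return [
        math.tanh(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def random_layer(n_in, n_out, rng):
    """Build untrained (random) weights; a real network would learn these."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
    biases = [rng.uniform(-1, 1) for _ in range(n_out)]
    return weights, biases


def forward(frame, layers):
    """Pass one audio frame through each layer in turn, keeping every step."""
    activations = [frame]
    for weights, biases in layers:
        activations.append(dense_layer(activations[-1], weights, biases))
    return activations


rng = random.Random(0)

# A 16-sample frame of a 440 Hz sine wave at an assumed 8 kHz sample rate.
frame = [math.sin(2 * math.pi * 440 * n / 8000) for n in range(16)]

# Three layers, each condensing the previous layer's output.
layers = [random_layer(16, 8, rng), random_layer(8, 4, rng), random_layer(4, 2, rng)]

activations = forward(frame, layers)
layer_sizes = [len(a) for a in activations]
print(layer_sizes)  # [16, 8, 4, 2]
```

Each layer here sees only the output of the one before it, which is the "sequential" part. In a trained network, those successive outputs tend to describe the sound in increasingly abstract terms.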

If all that sounds both very nerdy and decidedly un-rock ’n’ roll, let me introduce you to DADABOTS. Since 2017, duo Zack Zukowski and CJ Carr have been training neural networks on a heady mix of math rock, black metal, and punk in order to generate inhuman riffs, screams that no lungs could muster, and endless rhythmic complexity. DADABOTS’ approach to AI music is both labour-intensive and creatively demanding: speaking of their recent entry to the annual AI Song Contest, the pair told us that they “treated the AI models like they were session musicians, directed by our imagination”. Each musical idea required selecting, training and ‘tuning’ a neural network to consistently generate musical textures in a fitting style. Even then, the duo needed to “dig through hundreds of variations to find the ones that fit best” before arranging the parts in a traditional DAW.

The resulting track, Nuns in a Moshpit, is a tour de force of AI production techniques. “We used eight different families of neural nets, some of which is our new research from the past year,” say the duo. “Valuable PhD and R&D hours were spent building totally new concepts for AI models, just to generate single 5 second riffs in this song that only appear once.”

Then there are artists like Robin Sloan and Jesse Solomon Clark, whose musical project, The Cotton Modules, blends bleeding-edge tech with decidedly lo-fi working habits to achieve their ambient and eerily evocative debut album, Shadow Planet. Using OpenAI’s Jukebox, which draws from a repertoire of more than a million songs to generate music and vocals, the pair began trading song ideas back and forth via cassette tape during the pandemic.

“I started by creating short musical ‘seeds’,” says Clark. “These can be anything – a harmonic progression, an arpeggio, a drone, melody.” Those initial ideas were passed to Sloan, who fed them into the AI. “The generation process is super-slow,” Sloan says. “There’s a lot of backtracking, deleting, re-doing. It’s pretty annoying, honestly, but along the way, the AI is producing these weird, wonderful, totally unpredictable melodies and voices.”

Far from atrophying their creativity or cheapening the songwriting process, the pair view AI as “the first of a new kind of synthesizer,” and like any instrument, it needs to be learnt, mastered, and played to achieve inspiring results. “I actually perceive what comes out of the AI as profound material,” says Clark. “To me it’s millions of ghosts of humanity, singing at once. This is not ‘the computer’ making music – the AI is based on a million songs, thousands of hours of recorded music, hard-working talented human beings singing their hearts out. To compose music alongside a small distillation of that vast ocean of spirit is awesome, literally.”

Underpinning the work of artists are the toolmakers themselves – the developers building the foundations of AI music. One of the key names in this space is Yotam Mann, a New York-based musician, instrument builder, and creative coder who’s been involved with many of the most exciting AI music projects of the past few years. Having previously worked at Google Creative Lab, where he contributed to deliciously idiosyncratic instruments such as The Infinite Drum Machine and NSynth, in 2019 Mann began kicking around ideas with fellow developer and long-time member of indie-electronic band Plus/Minus, Chris Deaner.

“A lot of people were making really amazing algorithms and new techniques and writing research papers,” says Mann. “But they would ultimately exist as a command line interface to a Python module. No-one was building for musicians.”

Seeing this gap between academic research and practical music making, Mann and Deaner founded Never Before Heard Sounds in 2020 with the goal of making the technology accessible for average musicians, as well as musically expressive. Though the company has yet to launch a publicly available product, you might have heard their algorithms at work in Holly Herndon’s groundbreaking Holly+ project. Using an AI model trained on Herndon’s voice, the instrument allows anyone to input polyphonic audio files and have them transformed into an eerie simulacrum of the singer’s voice. They’ve even been dabbling with real-time versions of the same process.

This seeming magic trick is achieved using what Mann and Deaner call ‘Audio Style Transfer’, which works by training an AI model on a single class of instrument. “You feed it enough guitar and it learns to reproduce a guitar – and then all it can reproduce is guitar,” says Mann. “Any sound you put into it is going to come out as guitar. That’s kind of the crux of how we create a model like this.”
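Mann's description can be loosely illustrated with a deliberately crude stand-in. The sketch below is not Never Before Heard Sounds' method, which relies on trained neural networks. It swaps in a simple nearest-neighbour lookup, and the class `ToyStyleModel`, the synthetic 'guitar' and 'voice' signals, and the frame size are all hypothetical. What it does share with Mann's account is the constraint: the model has only ever seen one kind of sound, so that is the only kind of sound it can return.

```python
import math


def frames(signal, size):
    """Split a signal into consecutive, non-overlapping frames."""
    return [signal[i:i + size] for i in range(0, len(signal) - size + 1, size)]


def distance(a, b):
    """Squared Euclidean distance between two frames."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


class ToyStyleModel:
    """Hypothetical stand-in: memorises frames of one instrument only."""

    def __init__(self, frame_size):
        self.frame_size = frame_size
        self.codebook = []

    def train(self, signal):
        # "Feed it enough guitar": store every frame of the training sound.
        self.codebook.extend(frames(signal, self.frame_size))

    def transform(self, signal):
        # Replace each input frame with the closest frame seen in training,
        # so the output is built entirely from the training instrument.
        out = []
        for frame in frames(signal, self.frame_size):
            nearest = min(self.codebook, key=lambda c: distance(c, frame))
            out.extend(nearest)
        return out


# Synthetic placeholders: a sawtooth wave for 'guitar', a sine for 'voice'.
guitar = [((n % 20) / 10.0) - 1.0 for n in range(400)]
voice = [math.sin(2 * math.pi * n / 32) for n in range(128)]

model = ToyStyleModel(frame_size=8)
model.train(guitar)
output = model.transform(voice)
```

Whatever goes in, every frame that comes out is drawn from the 'guitar' recording. A neural model generalises far more gracefully than this lookup table, but the underlying restriction is the same one Mann describes.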

It’s a creative paradigm that opens tantalising possibilities for music makers. Much as MIDI-based producers have grown accustomed to laying down a beat or melody and then tweaking parameters, flipping through presets, or swapping to completely different instruments, Audio Style Transfer brings that same working process to sound recordings. A few sung lines can become a guitar solo, and a guitar solo can become a choir.

Given these early successes, expectations are high for the company’s first large-scale project – an AI-augmented browser-based DAW that unites their audio style transfer tools with the ability to separate and sample individual stems from audio files. And ‘stem-splitting’ is just the start: as the power of AI continues to develop, the usable data that can be extracted from sound will only deepen. Mann and Deaner see a future where the art of sampling takes on new dimensions: the dynamic range of a vocal performance, the tone of a beloved guitarist, even the difficult-to-define ‘vibe’ of an iconic recording session will eventually be opened up for sampling and creative reuse.

“All these different musical features used to come as a big lump sum called ‘the sample’, but now we can start to tease all of these things apart,” says Mann.

It’s hard not to get swept up in the enthusiasm of those working at the technological edges of music, and it’s easy to forget that for every company using AI to explore new sonic territory, there is another working to automate away whole sectors of composing work. Music written for commercials – sometimes called ‘functional music’ – is often seen as the most at risk of AI replacement, but this issue isn’t just a concern for jingle writers. Spotify has faced numerous allegations that it pads out its mood-based playlists with ‘fake artists’ who don’t need to be paid royalties. As services like Amper continue to refine their algorithms, it’s not hard to imagine an expanded definition of ‘functional music’ that sees audio for relaxation, sleep, productivity, and exercise as fair game for automation.

Of course, the indefatigable march of innovation won’t be stopping anytime soon, but it would be callous to dismiss the valid concerns of music professionals out of hand. The industry has always been a precarious way to make a living, and the prospect of paid work being swallowed up by algorithms is no laughing matter – especially when most societies are a long way from offering any kind of universal basic income.

At the same time, to focus purely on possible negatives is to miss the truly awesome potential AI clearly has to support and elevate human creativity rather than supplant it. For their money, DADABOTS see AI music creation as less about a zero-sum game and more about diversification. “Songwriters and composers will still do their thing,” they say, “but AI music defines new roles.” Those roles span everything from ‘sample diggers’ who trawl an ocean of AI outputs to find the perfect sound clips, to what the pair call ‘neural net performers’ – a new genre of live music based around generative models and audio style transfer.

“Lots of people in AI music are trying to make the same kind of pop music everyone’s already heard. I hope that trend dies,” says DADABOTS’ CJ Carr. “I want to see weird and cool new genres invented, I want to unleash a renaissance where 10 new genres are invented by people every day and we get to hear them. After we make the most powerful music AI in the world, we need to give it to 4-year-olds who will wield its power – what will they make? Something never before heard.”