Demo the music tagging AI that could help you get discovered

Music AI start-up’s co-founder/CEO explains the tech.

Musiio, a music technology start-up using AI to automatically identify and tag songs, has released a free web demo, letting you try out their technology on your own music.

The company was created to find a solution to one of the greatest problems currently facing the music industry: that of music discovery.

Right now, record labels, streaming services, production music libraries and plain old listeners are struggling to make sense of the vast quantity of new music being created. Every day, for example, around 40,000 new tracks are uploaded to Spotify’s database of over 40 million songs.

To listen to one day’s worth of uploads would take a person 83 days solid. For Musiio’s AI, the same task takes only 4 hours.
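
The 83-day figure holds up as a back-of-the-envelope calculation. The sketch below assumes an average track length of three minutes, which the article does not state. It also works out how much faster than real-time playback Musiio's quoted 4-hour processing time would be under that same assumption.

```python
# Back-of-the-envelope check of the "83 days" figure.
# ASSUMPTION: an average track length of 3 minutes (not stated in the article).
TRACKS_PER_DAY = 40_000      # new uploads per day, as quoted
AVG_TRACK_MINUTES = 3.0      # assumed average track length
AI_PROCESSING_HOURS = 4.0    # Musiio's quoted processing time


def listening_time_days(tracks: int, avg_minutes: float) -> float:
    """Total non-stop listening time for all tracks, in days."""
    total_minutes = tracks * avg_minutes
    return total_minutes / 60 / 24


def speedup_vs_realtime(tracks: int, avg_minutes: float, processing_hours: float) -> float:
    """How many times faster than real-time playback the processing is."""
    audio_hours = tracks * avg_minutes / 60
    return audio_hours / processing_hours


if __name__ == "__main__":
    days = listening_time_days(TRACKS_PER_DAY, AVG_TRACK_MINUTES)
    speedup = speedup_vs_realtime(TRACKS_PER_DAY, AVG_TRACK_MINUTES, AI_PROCESSING_HOURS)
    print(f"Human listening time: {days:.1f} days")   # 83.3 days
    print(f"Speed-up over real time: {speedup:.0f}x")  # 500x
```

With three-minute tracks, a day of uploads is 2,000 hours of audio, so processing it in 4 hours means running at roughly 500 times real time.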

The idea is that correct tagging of music will make playlisting and music discovery easier for talent scouts, music libraries and streaming services. And ultimately, that should help great artists be heard through the noise.

Try it for yourself

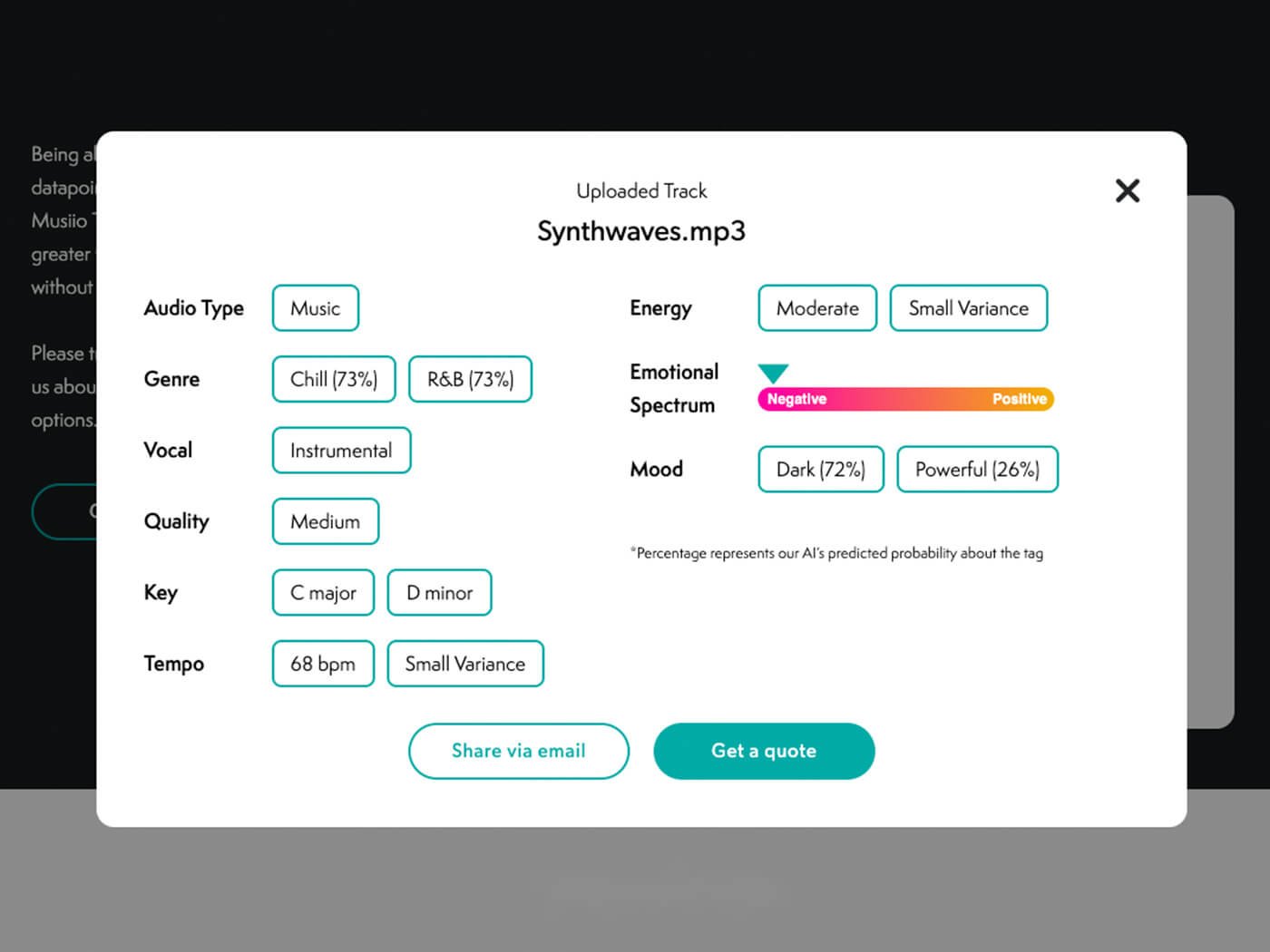

For the public web demo, the AI takes your track, transforms it for analysis by neural networks, then assigns tags in eight categories, including genre, mood, BPM, key, quality, energy and where the track sits on an ‘emotional spectrum’. What’s impressive here is that all of these tags are derived from the audio alone, not from metadata.

Musiio’s reasoning for releasing a public version is to take the ‘scare factor’ out of their AI. They also want the public to know what the AI can – and can’t – do. So, give it a try.

Musiio CEO explains all

To find out how AI could benefit the music industry, we sat down with Musiio’s CEO Hazel Savage.

Who is Musiio?

Musiio is a like-minded bunch of 16 tech and music specialists working out of our HQ in Singapore. We are co-led by me, a British CEO with 14 years’ experience (Shazam, Universal, Pandora), and Aron Pettersson, our CTO, a Swedish national and AI scientist with 17 years’ coding experience. Our team is made up of the best and brightest Singapore has to offer, and as Singapore’s first-ever VC-funded music tech company, we pride ourselves on our diversity and creativity.

What is AI? AI vs ML

Lots of companies are moving into AI, and in the music industry specifically, businesses and artists alike are trying to understand the impact it will have on them. Artificial intelligence is a buzzword that has been floating around for the past few years. On its own, it is a rather broad concept, often defined in more sci-fi terms as: ‘A machine or computer that can mimic something that appears like human behaviour.’ Most companies these days are actually using a narrower definition: a subset of AI referred to as machine learning, ‘Using large amounts of data to teach a computer rote tasks, and have it be able to perform a single function, or combined set of functions in repetition, as well as or better than a human.’

How might this technology affect artists in the future?

I always get asked how artists will be impacted by technology like this, and my reply is that we hope our technology will make talent more discoverable. Currently, if you’ve made one of the 40,000 new songs released into the streaming ecosystem each day, it is almost impossible to be found. By using AI to ‘listen’ to new songs and add them to discovery playlists, we hope to help unearth talent that is not necessarily just based on marketing budgets. And if you work for a sync company or a streaming service, our goal is to build tools that supercharge the curators or researchers you already have, giving them a way to create more hero playlists with more variety and leaving them more time to focus on other projects that need their touch.

Why is Musiio different?

When the words music and AI are in the same sentence, most people assume you’re actually talking about AI-written music. Here at Musiio, we’ve become renowned ‘AI-written-music’ sceptics, largely led by me and my experience in the music industry. Now, that’s not to say that AI-created music isn’t a fascinating area of academic research, or that there aren’t people doing inventive things in the space, but I’m a realist when it comes to business. We know that AI or ML as a technology in any field works best when it replaces a repetitive function or does something we simply cannot do otherwise. Packing boxes in a warehouse is one example of a repetitive task; detecting fraud by looking at every transaction a bank makes and comparing it to every transaction ever made is a task that cannot scale manually.

In the case of music, the 72 hours of content uploaded to SoundCloud every minute, or the 40k new songs added to Spotify every day, tell me that we have no lack of people actively willing to create music; it’s not a repetitive task that needs solving. And if you think about every hit song you know, each one was created by a person, or by many people together. It’s not something humans can’t or won’t do, so I question whether we need to replace it as a function with AI technology. Creating music is not so repetitive or so hard to scale that people won’t do it.

Who is the AI designed for?

We love to work with sync companies, labels with internal databases, and streaming services that need curation. If you want to get in touch, just message us through the website.

What’s the purpose of the ‘Emotional Spectrum’ and ‘Mood’ tags?

Lots of our sync and playlisting partners are especially interested in these tags. There is a school of thought that mood is a more important identifier than genre when playlisting music. Teaching the AI to identify moods is something our customers ask us for a lot.

What does the ‘Quality’ tag denote?

We trained our AI on a data set that contained commercial-quality music at one end of the spectrum, freely available uploaded music (user-generated content) at the other end, and everything in between. The purpose of this is to teach the AI to understand differences in recording quality. For a playlist, you might only want the top few percent in quality; for A&R, on the other hand, you might assume that anything of very high quality was recorded in an expensive studio and is likely already ‘signed’, so you would pre-select low- or medium-quality tracks. The AI itself determines which factors to identify; we just show it what the results look like.
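
To make the two use cases concrete, here is a minimal sketch of how a quality score might be used downstream. It assumes a hypothetical numeric quality score between 0 and 1 per track and a hypothetical high-quality cut-off of 0.7; the article does not describe Musiio’s actual output format, score scale or thresholds.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Track:
    title: str
    quality: float  # HYPOTHETICAL quality score in [0, 1]; higher = more polished recording


def top_percent_by_quality(tracks: list[Track], percent: float) -> list[Track]:
    """Playlisting: keep only the top `percent` of tracks by quality (always at least one)."""
    ranked = sorted(tracks, key=lambda t: t.quality, reverse=True)
    keep = max(1, int(len(ranked) * percent / 100))
    return ranked[:keep]


def ar_shortlist(tracks: list[Track], high_cutoff: float = 0.7) -> list[Track]:
    """A&R: pre-select low- and medium-quality tracks, i.e. everything below an
    ASSUMED high-quality cut-off, on the theory that polished tracks are likely signed."""
    return [t for t in tracks if t.quality < high_cutoff]
```

For example, given five tracks scored 0.95, 0.80, 0.55, 0.30 and 0.10, asking for the top 20% returns only the 0.95 track, while the A&R shortlist returns the three tracks below 0.7. The same score serves both teams; only the filter changes.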

How might this be integrated into a streaming service like Spotify and how would it benefit listeners?

If Spotify has 40k tracks uploaded a day, they can’t all be listened to manually. Automated tagging makes the catalogue more searchable, and streaming services can use tags to add a level of automation to curation. That said, the sync industry is really the biggest user of tags: large production music catalogues are easier to sort when they are tagged.
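
As an illustration of why tagged catalogues are easier to sort, the sketch below filters a catalogue by a mood tag and a BPM range. The `CatalogueTrack` structure, the tag names and the `search` helper are all hypothetical and are not part of Musiio’s product.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class CatalogueTrack:
    title: str
    bpm: int
    moods: set[str] = field(default_factory=set)  # HYPOTHETICAL mood tags


def search(catalogue: list[CatalogueTrack], mood: str, bpm_min: int, bpm_max: int) -> list[CatalogueTrack]:
    """Return tracks carrying `mood` whose BPM lies in [bpm_min, bpm_max], in catalogue order."""
    return [t for t in catalogue if mood in t.moods and bpm_min <= t.bpm <= bpm_max]
```

A music supervisor looking for an uplifting cue between 115 and 130 BPM can then run a single query instead of auditioning the whole library.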

What’s preventing those services from doing this themselves?

Given Spotify’s acquisitions of The Echo Nest in 2014 and Niland in 2017, I think it’s fair to say that if anyone has something comparable to this tech, it’s them. That said, there are a lot of services in the world that don’t have the tech, and in a competitive market, we offer a cheaper solution than hiring five AI devs who may or may not be able to replicate our results.

How accurate is it?

All of our tags have an accuracy rating of between 90% and 99%. Some tags are much harder to teach the AI than others. We generally find that the more source data we have and the more time we spend training, the higher the accuracy. We also build custom tags for our customers using the same technology, fitting the process to their in-house tagging system. That requires fresh training, but our tech scales really well.

When one of the tags comes back with two results and a percentage for each, what does that mean?

The percentage value is ‘how confident the AI is that the result is right’, so you might tag a track and it comes back 80% Rock, 40% Metal. The AI is 80% sure it’s a rock song but only 40% sure it could be a metal song. In a case like this, the AI is usually ‘seeing’ certain traits of each. We also have the demo set to return a maximum of two tags per category, but this can be customised.
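
Because 80% and 40% add up to more than 100%, the scores suggest independent per-tag confidences rather than a single probability split across genres. The sketch below shows one common way to get that behaviour and apply a ‘maximum of two tags per category’ rule. It assumes hypothetical raw model scores (logits) converted with a logistic (sigmoid) function. This is a standard multi-label technique, not necessarily how Musiio computes its percentages.

```python
from __future__ import annotations

import math


def sigmoid(x: float) -> float:
    """Map a raw score to an independent confidence in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def top_tags(raw_scores: dict[str, float], max_tags: int = 2) -> list[tuple[str, int]]:
    """Convert HYPOTHETICAL raw scores into independent confidences and return at most
    `max_tags` (tag, percent) pairs, highest first. Confidences are computed per tag,
    so they need not sum to 100%."""
    confidences = {tag: sigmoid(score) for tag, score in raw_scores.items()}
    ranked = sorted(confidences.items(), key=lambda kv: kv[1], reverse=True)
    return [(tag, round(conf * 100)) for tag, conf in ranked[:max_tags]]


if __name__ == "__main__":
    # Raw scores chosen so the output mirrors the 80% Rock, 40% Metal example.
    genre_scores = {"Rock": math.log(4), "Metal": math.log(2 / 3), "Jazz": -2.0}
    print(top_tags(genre_scores))  # [('Rock', 80), ('Metal', 40)]
```

Raising `max_tags` is all it takes to return more tags per category, which matches the point that the two-tag limit is a demo setting rather than a property of the model.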

Is AI a threat?

Congratulations if you made it through that whole explanation. But the explanation is important, especially for us here at Musiio, since we are focused on the curation of music using AI. It’s important because in our world, AI for music is not scary: it has no sentience, and it definitely isn’t a robot trying to steal your job; quite the opposite, in fact. We aim to help artists be found, and to help labels and streaming services deliver a better, more personalised product.

You can try uploading your own music to Musiio’s AI using their free widget at musiio.com now.