What is the future of synths? Korg, Newfangled Audio and Madrona Labs share their predictions

Engineers and developers on the cutting edge of synthesis reveal what the future has in store for synthesizers.

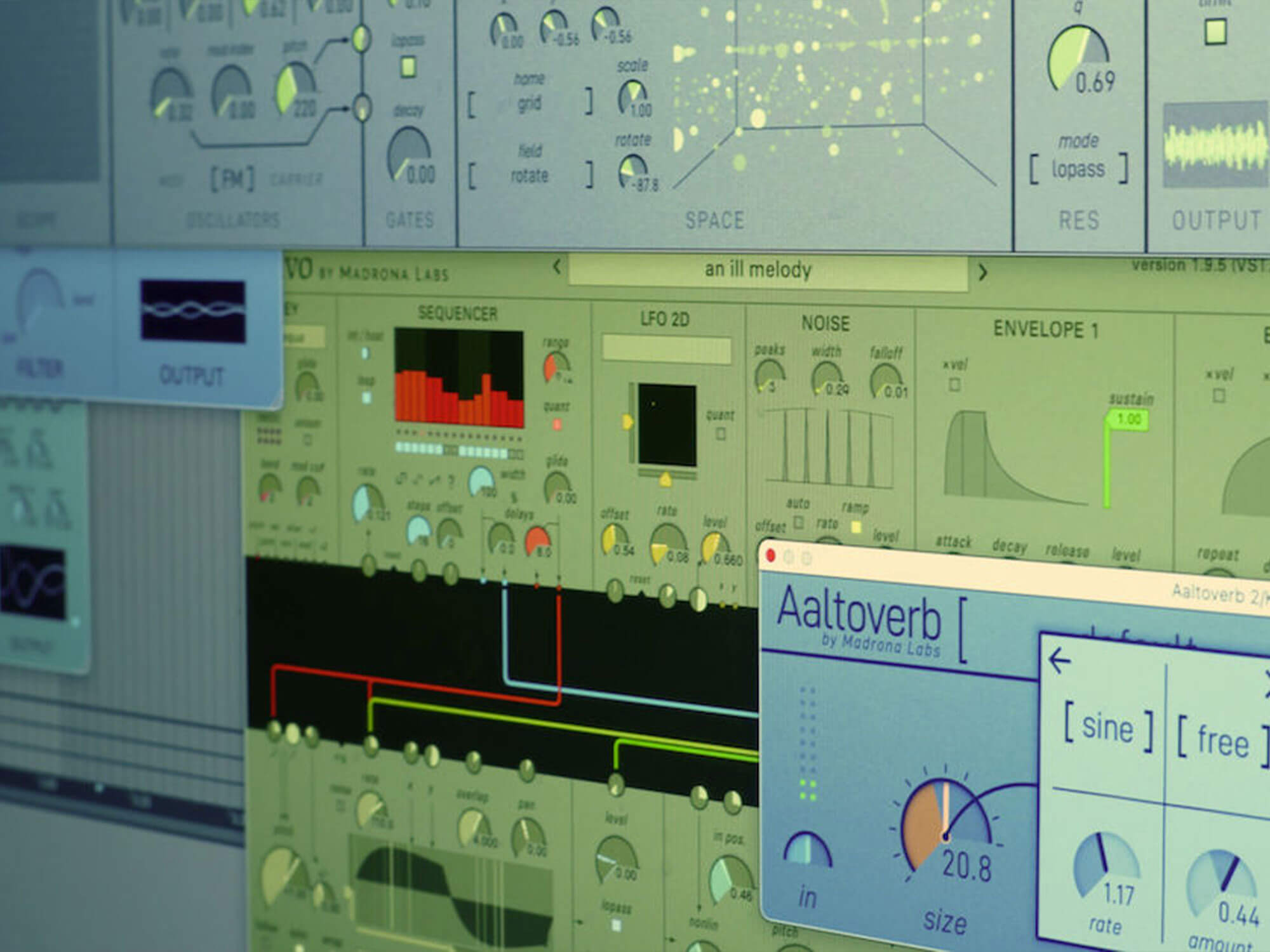

Madrona Labs Sumu. Image: Madrona Labs

Synthesizers are an incredible mixture of physical instruments and electronics. While the physical side of things doesn’t change very much – the piano has remained much the same for centuries now – the technology inside is like a wild rollercoaster ride, twisting and turning, with new highs around every corner. Or at least it used to feel that way. The past decade or so has seen the evolution of synthesis slow down, with modifications replacing wholesale introductions.

While there have been a few modern developments, most of the synthesizers we enjoy today are based on old ideas. Analogue has been around since the middle of the 20th century, while sampling, FM synthesis and physical modelling came out of advancements made in the 1970s. Even virtual analogue, which has played such a large part in modern synthesizers, developed out of physical modelling.

But is this just the quiet before the storm? There is surely something new, some excitingly novel form of synthesis lurking just around the corner. AI seems a likely candidate for the Next Big Thing – but then again, who knows?

If anyone does know, it’s the engineers and developers working on synthesizers: people like Korg Berlin CEO Tatsuya Takahashi and Korg Berlin’s Lukas Hartmann; Randy Jones of Madrona Labs; and Dan Gillespie from Newfangled Audio. They’re all engaged in pushing the envelope of synthesis.

Here’s what they had to say.

What is the future of synthesis?

Randy Jones, Owner and Systems Designer, Madrona Labs

“Since computers became music machines, we can think of all sounds as being out there in the possible space of data, somewhere. And so synthesis is really about finding them. Finding them, and connecting them in meaningful ways to controls for performance.

“There are a limitless number of ways to think about sound; new metaphors that can become tools for synthesis, and I think we’ll continue to see a big kind of opening up as people use computers and new analogue/digital hybrids to explore these possibilities.

“The idea for my newest synth, Sumu, came from a very specific experience: listening to a stream in the woods and thinking about how to make a sound like that. Or ‘What if the stream could sing?’ These kinds of ideas are available to anyone willing to be still for a moment and pay attention to nature. And with computers and sensors, we can take these abstract or even poetic ideas and design usable, human-centred systems around them. That’s the future I want to help build.”

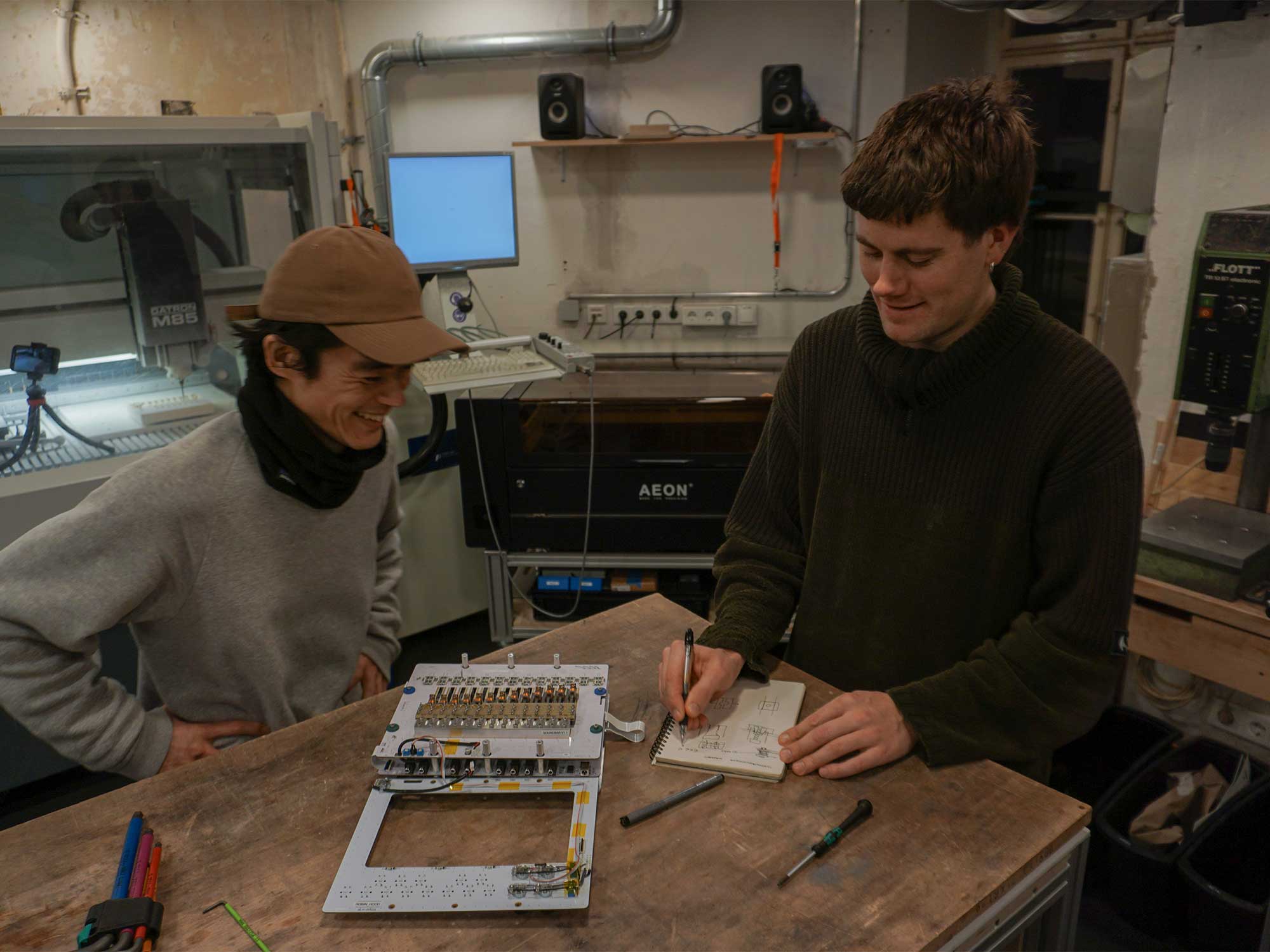

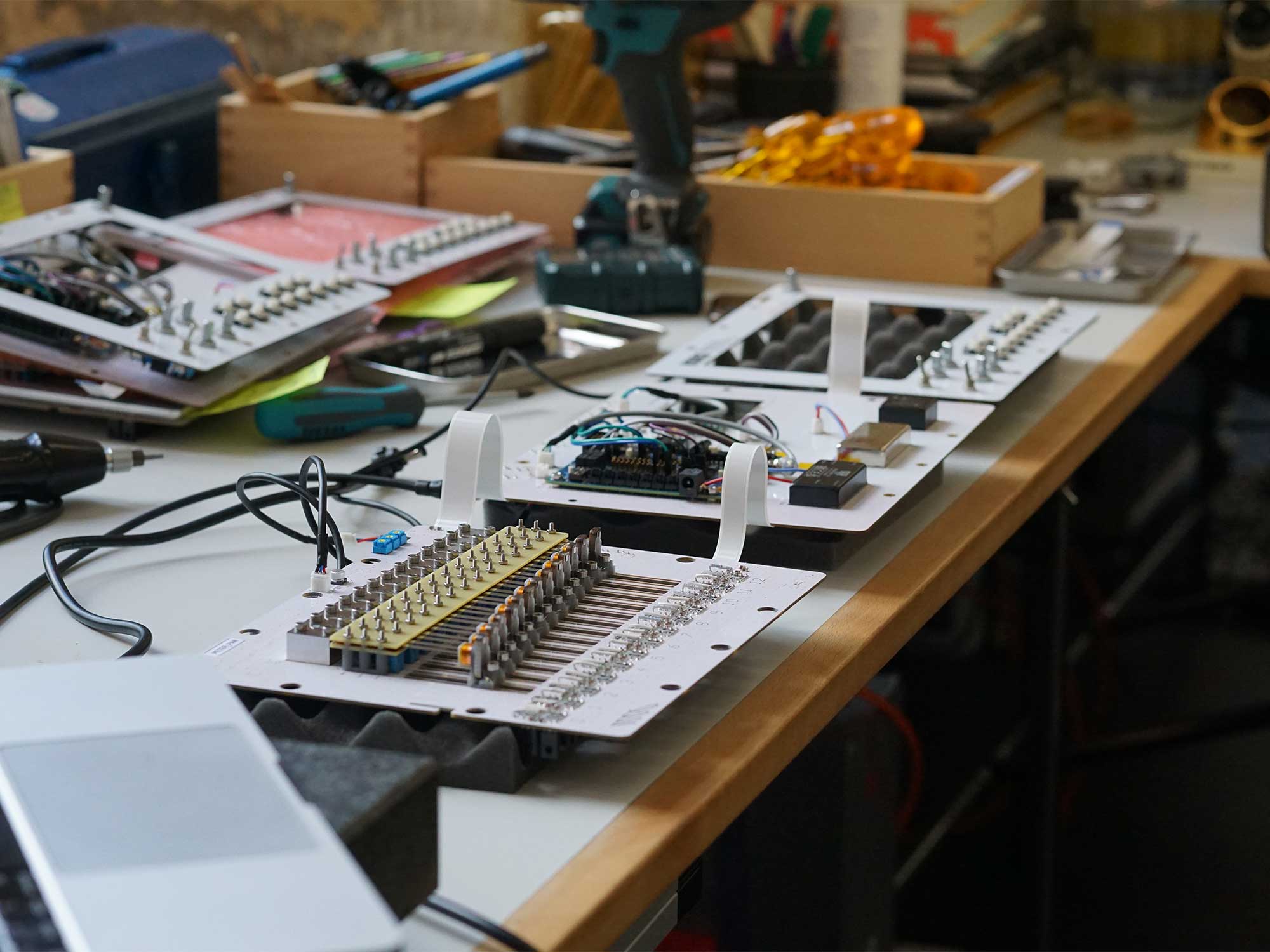

Lukas Hartmann, Hardware Developer, Korg Berlin

“I don’t know if I see any one future of synthesis. I guess it depends on how you look at it but, importantly, I don’t think you need future tech to make new and exciting sounds. I think that’s why making instruments is fun.”

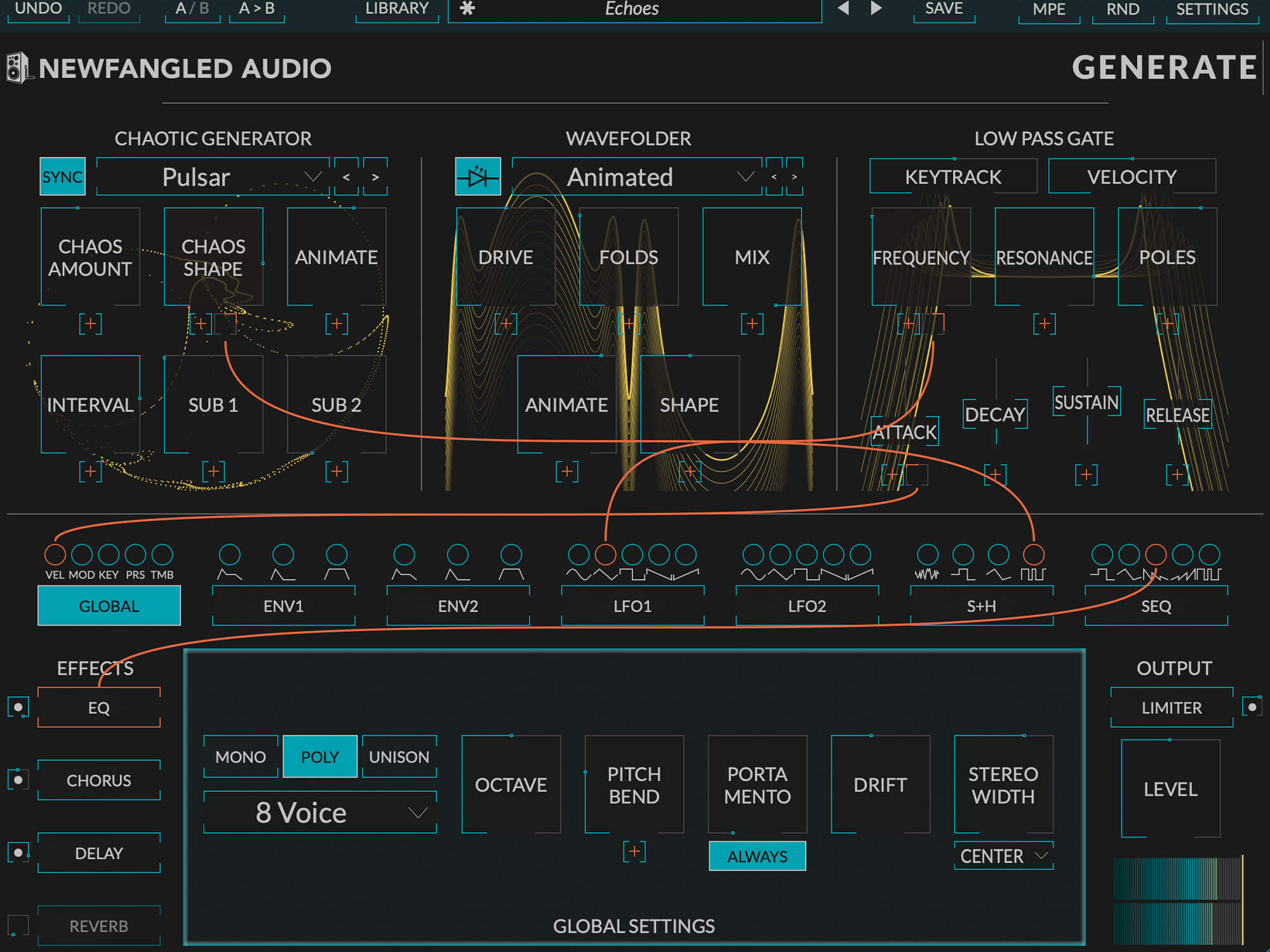

Dan Gillespie, Founder, Newfangled Audio

“I don’t think anyone knows exactly, which is fantastic. I tend to view all musical instruments as a combination of an interface (the part the human interacts with) and a synthesizer (the part that makes the sound). Both are equally important. For instance, a piano and a harp both have a synthesizer comprised of resonating strings connected to a soundboard but they sound totally different because the interface is different. This way, I don’t think of a synthesizer as something that started with the invention of electronics but something fundamental to our humanity, ever-changing, but ever the same.”

Tatsuya Takahashi, CEO, Korg Berlin

“The future is bright. I feel it in my bones that the world is shifting away from the geekery, knobs, switches and more into what helps you make music – with or without all the bells and whistles.”

Do you think AI will be a part of the future of synthesis?

Dan Gillespie

“Absolutely. It’s been a big year for AI but it’s important to remember that AI is just the word we use for computers doing things that we thought only people could do. Because of this, once we get used to computers doing these things, we stop calling it AI; now it’s just technology.

“That said, there are some new AI-branded technologies that have the capability to really transform how people make music.

“(A) recent AI trend has been audio-controlled synthesis. These are processes that take audio input, extract some control data, and use it to resynthesize a new sound. AI Drake is an example of this but similar projects exist which allow your voice to control other synthesizers. This analysis/resynthesis approach is not new to AI but neural networks are powerful here. I’d guess we’ll see more projects like this in the future.”
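The analysis/resynthesis loop Gillespie describes can be sketched without any neural network at all. The Python below is only an illustration under stated assumptions, not how any product mentioned here works: the function names `extract_controls` and `resynthesize` are hypothetical, the control data is limited to loudness (RMS level) and a rough pitch estimate from zero crossings, and the new sound is a naive sawtooth oscillator. A neural approach would replace these hand-written steps with learned ones.

```python
import math


def extract_controls(signal, sample_rate, frame_size):
    """Return one (rms_level, frequency_hz) pair per full frame of `signal`."""
    controls = []
    for start in range(0, len(signal) - frame_size + 1, frame_size):
        frame = signal[start:start + frame_size]
        # Loudness control: root-mean-square level of the frame.
        rms = math.sqrt(sum(x * x for x in frame) / frame_size)
        # Indices where the waveform crosses zero going upward.
        ups = [i for i in range(1, frame_size) if frame[i - 1] < 0.0 <= frame[i]]
        if len(ups) >= 2:
            # Whole periods divided by the time they span: a rough pitch estimate.
            freq = (len(ups) - 1) * sample_rate / (ups[-1] - ups[0])
        else:
            freq = 0.0  # too few crossings to estimate a pitch
        controls.append((rms, freq))
    return controls


def resynthesize(controls, sample_rate, frame_size):
    """Drive a naive sawtooth oscillator with the extracted controls."""
    out = []
    phase = 0.0  # kept across frames so the waveform stays continuous
    for rms, freq in controls:
        for _ in range(frame_size):
            out.append(rms * (2.0 * phase - 1.0))
            phase = (phase + freq / sample_rate) % 1.0
    return out


# Stand-in for a recorded voice: one second of a 220 Hz sine tone.
sample_rate = 44100
frame_size = 4410  # 100 ms analysis frames
voice = [0.5 * math.sin(2 * math.pi * 220 * n / sample_rate)
         for n in range(sample_rate)]

controls = extract_controls(voice, sample_rate, frame_size)
new_sound = resynthesize(controls, sample_rate, frame_size)
```

The input here is a plain sine and the output a sawtooth that follows its pitch and level; swapping the oscillator in `resynthesize` for any other sound source is what lets one signal "play" another.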

Randy Jones

“Sure, but let’s call it what it is: statistics – or if you like, machine learning. ‘Artificial Intelligence’ is a marketing term that was effective at getting military funding when it was first invented and more recently at boosting the share price of some startups. It obscures the truly neat new things that can be done with machine learning techniques.

“Synplant 2 is a great example of how to apply machine learning to make something cool, useful and new. What’s great about it is that Sonic Charge started with a nice synth engine and built the machine learning stuff around helping people use that. As the tools to do similar things become easier to use I’m sure we’ll see other remarkable new uses of machine learning to find sounds.”

Tatsuya Takahashi

“There will always be a place for resource-efficient content generation and AI looks to serve this sector for sure.

“As for the prospect of AI as a creative tool, I think the rebellion against it will be the more interesting driver for new music than its direct use. There is always going to be counter play to keep things interesting. Punks against the rockers. Impressionists against the academics. That kind of thing.”

Lukas Hartmann

“I absolutely think it will, and it already is. I reckon I see AI as more of an enabler – more than AI being the voice of an instrument, maybe the most exciting thing about it is the instruments it can allow us to make. But let’s see!”

Have we reached the point where current synthesis methods such as analogue or FM are at their peak, with only room for small adjustments as with the guitar or piano?

Randy Jones

“Yes, some kinds of synths have definitely entered the realm of codified instruments. A few things like a Moog-y filtered mono voice or a lush stringy pad are part of the timbral vocabulary of composers now, and so probably, forever, we’ll see versions of those instruments that differentiate mainly on usability and other features.

“But there are always composers and sound designers at the cutting edge who love to incorporate new things and then, if there’s enough sustained musical activity around a weird new thing, it can become commonplace too.”

Lukas Hartmann

“Maybe? But there’s still a huge amount of people making and enjoying those. For the majority of people, a synthesizer probably isn’t defined by the technology inside it but much more by the music it allows them to make. For me, it’s more about connection and character, and guitar and piano surely excel in both of those categories. Let’s make more instruments like them!”

Dan Gillespie

“In the early 1700s, an Italian man named Bartolomeo Cristofori invented the gravicembalo col piano e forte, literally, the ‘harpsichord that can play soft and loud.’ We call this the first piano but it looked and sounded like a harpsichord. It took about 100 years and many false starts for the piano to morph into the instrument we know today. Steinway and Sons were still filing major piano patents in the 1930s.

“The point is that musical instrument development is better seen as an evolutionary process rather than a revolutionary one by which a new instrument is birthed from whole cloth. The good news is that musical instruments are probably evolving at a faster rate than ever before. In the past 20 years, wavetable synths have moved from a way to recreate acoustic sounds to their own instrument with their own unique sounds, and the cornerstone of several genres.

“Analogue modelling technology has also vastly improved over the last decade, enabling us to design ‘analogue synths’ that could never have been created in the real world. I view this as a new form of analogue design.

“Even on the interface side, MPE is a powerfully expressive protocol and the most successful way to control musical synthesis in a generation.”

Tatsuya Takahashi

“It would be amazing if synths reached the ubiquity of the guitar or piano. My job is done when someone dresses up as one of my designs at Halloween (and normal people get it). Seems we’re not there yet, so maybe we need to look outside of existing synthesis methods. We are doing that now with our acoustic synthesis research.

“But really, who cares about what the synthesis method is or whether the revisions are big or small? We’re just trying to make better instruments to make music with. End goal being a great Halloween costume, of course.”