An Introduction to Musical Terms – Learning the Language of Sound

The world of modern music production can appear totally alien to newcomers, with technical terms in regular parlance that may seem impenetrably complex. If you’re struggling with some of these concepts then Erin Barra is here to help you learn the language of sound…

Learning how to read and play music is very much like learning to speak another language. Staves, slurs, accidentals, dynamic markings, tempo indicators, meter markings, rests and more: there’s a whole lot of jargon you have to understand deeply to become a master musician, and those fundamentals are key to professional growth.

As a person who has worked on both sides of the glass, I increasingly find that the language of music technology can be challenging to grasp at first, and in some cases it puts people off music altogether. It’s scientific and technological, and one word can often mean many different things depending on the context (looking at you, ‘sample’!).

As an educator and lifelong learner, I have always found meaning in the use of metaphors to aid in teaching. For example, when I think about compression, I visualise a grandmother who only has a certain threshold for loud noises. When I think about FM Synthesis I think about a carnival ride that rotates in one huge circle with arms that have smaller rotations at each end.

Metaphors have always served me well when communicating concepts. In this feature we’ll break down many of the terms you’ll encounter in synthesis, sampling and digital signal processing (DSP) into easy-to-understand metaphors to help you grasp their meaning. Hopefully they’ll also help you remember them if you ever get stuck!

Frequency

Frequency is a word that you’ll see time and time again on almost every device you load into your DAW (particularly Live). Of course the word ‘frequency’ can refer to how often something occurs in a specified amount of time. To put it in the context of your life, think about how frequently you eat, your normal meal frequency being three meals per day.

Or how frequently your heart beats, which typically varies between 60 and 180 beats per minute depending on whether you’re resting or exerting a lot of energy. In the context of music production, frequency refers to how many times a waveform repeats each second, and it is measured in hertz (Hz). One hertz means ‘once per second’. If something is vibrating at 20 Hz, it is repeating 20 times each second and produces a very low pitch.

If an oscillator is vibrating at 16,000 Hz, it’s moving a lot faster and produces a much higher pitch. So, frequency in this context not only relates to how often something occurs, but in our world it also relates to the pitch of a note.

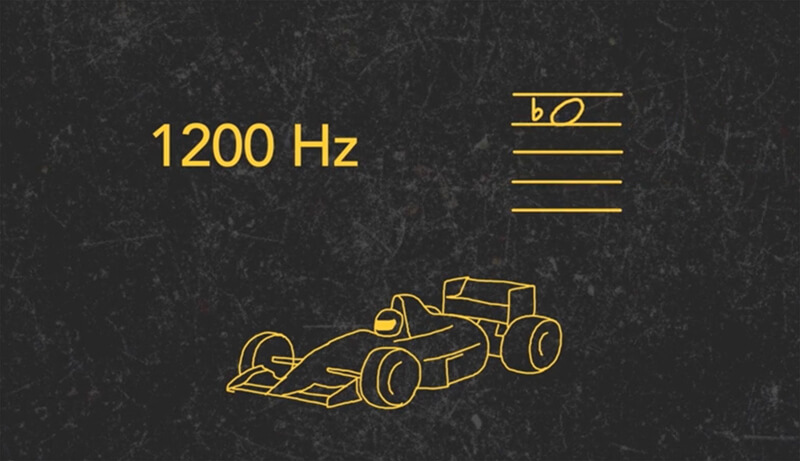

A good metaphor for frequency is a car engine. As you put your foot on the gas, the engine spins faster, and as its frequency of revolutions per minute (rpm) increases, so does the speed of the car. As this happens you can literally hear the pitch rise. A regular car engine usually cruises at 2,000-3,000 rpm, which results in an exhaust pitch of roughly 30-50 Hz, down near the bottom of the range humans can hear. Formula 1 engines spin considerably faster, producing a pitch of around 1,200 Hz, which lies between D6 and Eb6.
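
To make the arithmetic concrete, here is a minimal Python sketch. It converts revolutions per minute to hertz by dividing by 60, counting one cycle per revolution (which is how the cruising figures above work out; a real exhaust note also depends on the number of cylinders). It then names the nearest note in equal temperament, assuming the common tuning reference of A4 = 440 Hz. The function names are hypothetical, chosen for illustration.

```python
import math

# Note names within one octave, starting from C (MIDI convention: note 60 is C4).
NOTE_NAMES = ["C", "C#", "D", "Eb", "E", "F", "F#", "G", "Ab", "A", "Bb", "B"]


def rpm_to_hz(rpm: float) -> float:
    """Revolutions per minute -> cycles per second (hertz)."""
    return rpm / 60.0


def hz_to_note_name(freq_hz: float, a4_hz: float = 440.0) -> str:
    """Name the equal-tempered note closest to freq_hz.

    Assumes A4 = 440 Hz (MIDI note 69); each semitone is a factor of 2**(1/12).
    """
    midi = round(69 + 12 * math.log2(freq_hz / a4_hz))
    octave = midi // 12 - 1
    return f"{NOTE_NAMES[midi % 12]}{octave}"


if __name__ == "__main__":
    for rpm in (2000, 3000):
        hz = rpm_to_hz(rpm)
        print(f"{rpm} rpm -> {hz:.1f} Hz, nearest note {hz_to_note_name(hz)}")
```

For the two cruising speeds this gives about 33 Hz (nearest note C1) and 50 Hz (nearest note G1).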

To reiterate, ‘frequency’ is a measure of how fast a waveform vibrates, which in turn determines its pitch. Both of these ideas are important to keep in mind as you adjust the frequency parameter on your hardware or software.

Amplitude

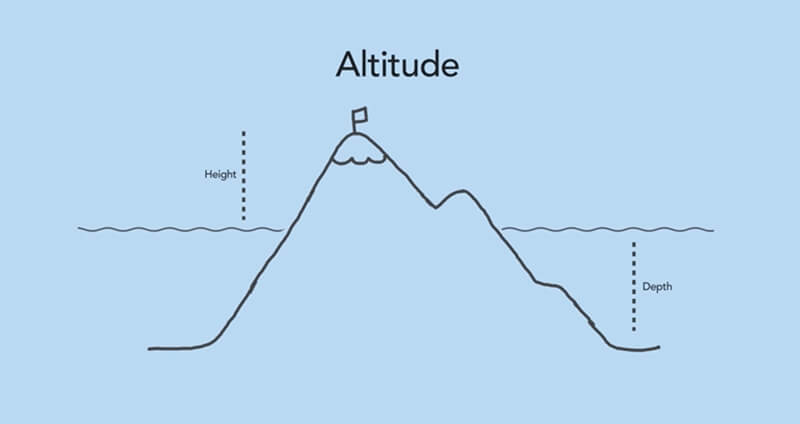

When you’re looking at a two-dimensional waveform display, ‘amplitude’ refers to the height of that waveform. For audio signals this corresponds to how loud the sound is. A quiet sound like a whisper has a small amplitude, while a sound with a lot of energy, like a jet engine, has a large amplitude. A good metaphor for amplitude is (hopefully not confusingly) altitude.

We measure the height of mountains as being a certain number of feet above sea level, and the depths and valleys of the oceans to be below sea level. Just like sound, mountains can be formed by two elements colliding.

For sound, it might be a finger plucking a string or striking a key, and in regard to mountains, it would be two tectonic plates running into each other. Depending on how much energy is behind these collisions, the resulting sound will be louder or quieter, which is to say larger or smaller in amplitude. In a way, you can visualise a mountain’s amplitude just by looking up: imagine how loud that collision must have been!
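
In a digital audio system a waveform is stored as a list of sample values, and its peak amplitude is simply the largest distance any sample reaches from zero, the waveform’s ‘sea level’. This minimal Python sketch, with hypothetical helper names, an assumed sample rate of 48,000 samples per second and arbitrary example heights, builds two sine waves at the same frequency but different amplitudes and measures their peaks.

```python
import math


def sine_wave(freq_hz: float, amplitude: float,
              sample_rate: int = 48_000, seconds: float = 1.0) -> list[float]:
    """Sample a sine wave whose peaks reach +/- amplitude."""
    n = int(sample_rate * seconds)
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate)
            for i in range(n)]


def peak_amplitude(samples: list[float]) -> float:
    """Largest distance from zero, in either direction (height or depth)."""
    return max(abs(s) for s in samples)


whisper = sine_wave(440.0, amplitude=0.05)   # small amplitude: quiet
jet = sine_wave(440.0, amplitude=0.9)        # large amplitude: loud
print(f"whisper peak ~ {peak_amplitude(whisper):.3f}")
print(f"jet peak     ~ {peak_amplitude(jet):.3f}")
```

Both waves share the same pitch; only the height of the waveform, and therefore the loudness, differs.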

Timbre

Another word for timbre is ‘tone’. Timbre is the distinguishing characteristic that differentiates one sound from another, even when both are playing the same frequency at the same amplitude. When we’re describing a sound’s timbre we often use words like ‘sharp’, ‘round’, ‘reedy’, ‘brassy’, or ‘bright’.

Let’s compare timbre to flavour. Think of apples: they’re a type of fruit with typical shapes, colours and flavours, but within the category of apples there’s huge variation. Some apples are very sweet while others are more sour, some are red while others are green… but they’re still all apples, regardless of their distinct characteristics. Red Delicious is different from McIntosh is different from Gala is different from Honeycrisp. But even then, within one specific variety, there might be variations from apple to apple.

Exactly the same goes for sound sources. One category of timbre we use quite often is strings. Within the string family we have violins, violas, cellos and double basses. Their timbres are similar in some ways, but each instrument is distinct.

When comparing two different violins, one might have a very bright sound, while the other is more muted and dark. Even when playing one violin we can produce different timbres by bowing a different way. So, put simply, timbre is an attempt to describe – as best as possible – ‘how’ something sounds.

Oscillator

Oscillators are the part of your synthesiser that actually creates the sound. You can think of them as vocal cords. They produce electronic signals that oscillate back and forth, just as your vocal cords vibrate. Oscillators ‘sing’ with specific voices, emitting waveforms such as sine, square, saw and triangle, each with its own distinctive timbre.

Some synthesisers have only a single oscillator, but others have several, which can all sing at the same time, each with its own pitch or tone, creating layered, richer sounds.
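
The four classic waveshapes can each be written as a function of the oscillator’s phase, meaning how far it is through the current cycle (from 0 to 1). The minimal Python sketch below assumes naive, non-band-limited shapes (real synthesisers usually take extra steps to avoid aliasing) and shows how two oscillators can sing together by adding their outputs. All names and the example frequencies are hypothetical.

```python
import math


def sine(phase: float) -> float:
    return math.sin(2 * math.pi * phase)


def square(phase: float) -> float:
    return 1.0 if phase < 0.5 else -1.0    # high for half the cycle, then low


def saw(phase: float) -> float:
    return 2.0 * phase - 1.0               # ramps from -1 up to +1, then jumps back


def triangle(phase: float) -> float:
    return 1.0 - 4.0 * abs(phase - 0.5)    # -1 at the cycle start, +1 halfway


def oscillator(shape, freq_hz: float, n_samples: int,
               sample_rate: int = 48_000) -> list[float]:
    """Render n_samples of a waveshape at freq_hz."""
    return [shape((freq_hz * i / sample_rate) % 1.0) for i in range(n_samples)]


def mix(*voices: list[float]) -> list[float]:
    """Sum several oscillators sample by sample, scaled to stay within +/-1."""
    return [sum(samples) / len(voices) for samples in zip(*voices)]


# Two oscillators singing together: a saw, plus a square one octave lower.
layered = mix(oscillator(saw, 220.0, 480), oscillator(square, 110.0, 480))
```

Swapping `saw` for `sine` or `triangle` keeps the pitch the same but changes the shape of each cycle, which is exactly the difference in timbre we hear.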

Filter

Filters are everywhere: they’re in our water pitchers and air conditioners, and they tailor the content we see on social media, to name just a few. We encounter filters so often that we tend to forget they’re there, like background noise. Speaking of background noise, we could use an audio filter to get rid of some of that! Let’s compare an audio filter to a kitchen strainer.

When, for example, we pour a pot of pasta into a strainer, we separate the liquid from the solids. How does this relate to music production? Well, when we’re sending audio into a filter which is only allowing certain frequencies to pass through it, we’re similarly separating those elements we want from those we don’t.

As you might expect, filters in a music production context are a bit more elaborate than kitchen strainers. Not only can we completely remove frequencies we don’t want, but we can also choose just to reduce their amplitude, or even boost it, allowing us to sculpt and shape the resulting timbre of our sounds.

Oscillators tend to emit very bright sounds, so you’ll often see a low-pass filter used. This allows the low frequencies to pass through the filter to reach your ears while reducing some of the higher, harsher frequencies.
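
One of the simplest digital low-pass filters is a one-pole smoother: each output sample moves only part of the way towards the new input, so fast wiggles (high frequencies) get smoothed away while slow ones (low frequencies) pass almost untouched. This Python sketch is an illustration under assumed values (a 500 Hz cut-off and a 48 kHz sample rate) with hypothetical function names; it is not how any particular synthesiser implements its filter.

```python
import math


def one_pole_lowpass(samples: list[float], cutoff_hz: float,
                     sample_rate: int = 48_000) -> list[float]:
    """Simple one-pole low-pass filter (roughly 6 dB per octave roll-off)."""
    # Smoothing coefficient: closer to 1 for higher cut-offs, letting more through.
    alpha = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate)
    out, y = [], 0.0
    for x in samples:
        y += alpha * (x - y)      # move part of the way towards the input
        out.append(y)
    return out


def sine(freq_hz: float, n: int, sample_rate: int = 48_000) -> list[float]:
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(n)]


def steady_peak(samples: list[float]) -> float:
    """Peak level of the second half, after the filter has settled."""
    return max(abs(s) for s in samples[len(samples) // 2:])


low = steady_peak(one_pole_lowpass(sine(100.0, 48_000), cutoff_hz=500.0))
high = steady_peak(one_pole_lowpass(sine(8_000.0, 48_000), cutoff_hz=500.0))
print(f"100 Hz passes at ~{low:.2f}, 8 kHz is reduced to ~{high:.2f}")
```

Like the pasta strainer, the filter lets the wanted part through; unlike it, the unwanted part is turned down rather than removed entirely.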

LFO

LFO stands for Low Frequency Oscillator, and it is exactly what the name suggests: an oscillator which emits an electronic signal just like any other oscillator, but at a very, very low frequency, so low in fact that it’s often below the range of human hearing.

You might ask yourself why anyone would create an oscillator that emits a sound we can’t hear. Well, the answer goes back to the idea of vibrations. The waveforms an oscillator creates travel back and forth, back and forth, back and forth, kind of like a pendulum, and we can use that movement to create many musical effects. An LFO swings back and forth too, but its output isn’t used to colour the sound directly. Instead, we use that oscillation to control other parameters.

Many common effects are created with LFOs. If we assign the LFO’s pendulum to a volume knob, we get tremolo: a repeating change in amplitude. If we assign it to an oscillator’s frequency parameter, we get vibrato, which is simply a repeating pattern of pitch changes. When we assign it to the cut-off frequency parameter of a filter, we get a cyclic variation in timbre, which is how we get those cool wobble bass lines.
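
The three assignments above can be sketched in a few lines of Python: a single sine-wave LFO is mapped onto a volume multiplier (tremolo), an oscillator frequency (vibrato) or a filter cut-off (wobble). The rates, depths and frequency ranges are assumed example values, and the function names are hypothetical.

```python
import math


def lfo(t: float, rate_hz: float) -> float:
    """Sine LFO: swings between -1 and +1, rate_hz times per second."""
    return math.sin(2 * math.pi * rate_hz * t)


def tremolo_gain(t: float, rate_hz: float = 5.0, depth: float = 0.5) -> float:
    """Volume multiplier that moves between 1.0 and (1 - depth)."""
    return 1.0 - depth * (lfo(t, rate_hz) + 1.0) / 2.0


def vibrato_freq(t: float, base_hz: float = 440.0, rate_hz: float = 6.0,
                 depth_semitones: float = 0.5) -> float:
    """Oscillator pitch that wavers up and down around base_hz."""
    return base_hz * 2 ** (depth_semitones * lfo(t, rate_hz) / 12)


def wobble_cutoff(t: float, rate_hz: float = 2.0,
                  low_hz: float = 200.0, high_hz: float = 2_000.0) -> float:
    """Filter cut-off that sweeps between low_hz and high_hz."""
    return low_hz + (high_hz - low_hz) * (lfo(t, rate_hz) + 1.0) / 2.0


for t in (0.0, 0.05, 0.1):
    print(f"t={t:.2f}s gain={tremolo_gain(t):.2f} "
          f"pitch={vibrato_freq(t):.1f} Hz cutoff={wobble_cutoff(t):.0f} Hz")
```

The LFO itself never reaches the speakers; only the parameter it is steering changes over time.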

Envelope

The word envelope is one that pops up a whole lot in sound design and can be a difficult concept to visualise. Think of the word envelope in its noun form; it’s what you put a letter inside of to send away in the mail. But in the context of music production, it’s more useful to think of the word in its verb form; ‘to envelop’.

The definition of ‘envelop’ is to ‘completely enclose or surround’, which is exactly what an envelope in the music production world does. A paper envelope encloses a letter, and, in a similar way, the envelopes on a synthesiser take an audio signal and wrap themselves around it, shaping and carving the sound from the moment it begins, until it ends.

Just as a filter shapes the timbre of a sound, an envelope shapes how a sound evolves over time. The most common envelope you’ll see is an amp envelope, which shapes amplitude over time, but there are many other possibilities. Another common use is a filter envelope, which wraps itself around a sound’s brightness by moving a filter’s cut-off frequency over time.
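
One widely used way to shape an amp envelope is in four stages, usually abbreviated ADSR: attack (rise to full level), decay (fall to a held level), sustain (the held level while the key is down) and release (fade to silence after the key is let go). The Python sketch below assumes straight-line stages and example timings (real envelopes are often curved), and its function names are hypothetical.

```python
def _held_level(t: float, attack: float, decay: float, sustain: float) -> float:
    """Envelope level while the key is held down (attack, decay, sustain)."""
    if t < attack:
        return t / attack                                    # rise from 0 to 1
    if t < attack + decay:
        return 1.0 - (1.0 - sustain) * (t - attack) / decay  # fall to sustain
    return sustain                                           # hold


def adsr(t: float, note_off: float, attack: float = 0.01, decay: float = 0.2,
         sustain: float = 0.6, release: float = 0.5) -> float:
    """Linear ADSR level (0..1) at time t seconds after the key is pressed."""
    if t < 0.0:
        return 0.0
    if t < note_off:
        return _held_level(t, attack, decay, sustain)
    # Release: fade from wherever the envelope was at note-off down to silence.
    start = _held_level(note_off, attack, decay, sustain)
    elapsed = t - note_off
    return 0.0 if elapsed >= release else start * (1.0 - elapsed / release)


def apply_envelope(samples: list[float], note_off: float,
                   sample_rate: int = 48_000) -> list[float]:
    """Wrap the envelope around a signal by multiplying sample by sample."""
    return [s * adsr(i / sample_rate, note_off) for i, s in enumerate(samples)]
```

Feeding the same `adsr` curve into a filter’s cut-off instead of multiplying the samples would turn it into the brightness-shaping filter envelope described above.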

Modulation

Put simply, modulation is change. It’s a word you’ve probably heard used for a key change that occurs mid-song. Think of Beyoncé’s Love on Top, which has four modulations towards the end of the song, each one raising the key a half step higher to build an ascending climb.

In this case, modulation refers to a change of harmony or key centre. More generally, modulation can apply to pretty much any parameter, since almost all of them can be changed (particularly in DAWs such as Ableton Live).

To tie two concepts together, one of the most useful modulations of an oscillator is pitch, which is assigned to the keys on a keyboard; this is known as key-tracking. In the same way that Love on Top moves up in half steps, as we move up the keyboard note by note, we’re modulating the pitch of an oscillator by a half step each time.
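
In equal temperament each half step multiplies the frequency by the twelfth root of two, so twelve half steps double it (one octave). This Python sketch assumes the MIDI convention that note 69 is A4 at 440 Hz; it shows an oscillator’s pitch key-tracking up the keyboard, and the overall pitch ratio of four successive half-step modulations like those in Love on Top. The names are hypothetical.

```python
SEMITONE_RATIO = 2 ** (1 / 12)   # about 1.0595


def key_to_hz(midi_note: int, a4_hz: float = 440.0) -> float:
    """Key-tracking: map a MIDI note number to an oscillator frequency."""
    return a4_hz * 2 ** ((midi_note - 69) / 12)


# Walking up the keyboard one key at a time multiplies the pitch by ~1.0595.
for note in range(60, 65):                      # C4 up to E4
    print(note, round(key_to_hz(note), 2), "Hz")

# Four half-step key changes in a row raise every pitch by 2**(4/12), about 1.26.
print(round(SEMITONE_RATIO ** 4, 4))
```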

An LFO uses its oscillation as a control signal to modulate other parameters. To refer back to our previous examples: to create tremolo we modulate volume at a certain rate, or frequency; to create vibrato we modulate pitch; and to create a wobble bass we modulate the cut-off frequency of a filter.

Getting a grasp

We hope our overview of these terms has helped you to grasp their meaning. Ultimately, all of this information should help you become a more confident music producer, undaunted by these terms and concepts.

Many courses cover these topics in more detail; one notable example is Berklee’s online Synthesis and DSP course, which you can find out about at https://online.berklee.edu/courses/ableton-live-techniques-synthesis-and-dsp. Be sure to check out the accompanying video on the DVD to visualise these terms in more detail. Happy producing!