Smart Songs: how AI is changing the way we listen

We speak to the developers at the technological frontier of music production and playback, to find out how AI is shaping music tech.

In our last look at AI and music, we took a deep dive into how smart algorithms are changing songwriting. But the potential uses of smart algorithms don’t stop there – from the way music is sculpted and refined in the studio to how consumers ultimately play and experience that music, AI-powered tools are having a foundational impact on the recording industry.

Music production service LANDR first made waves with AI in 2014 with its mastering algorithm. Promising to remove mastering from the realm of the ‘dark arts’ and put it within easy reach of average consumers, the company kicked off a contentious conversation about the use of AI in production. Ultimately, while LANDR’s launch grabbed headlines, the company caught an awful lot of flak – both for threatening to replace sound engineers and for not actually being anywhere near reliable enough to do so.

“When I first started at LANDR, as an engineer who’d ‘crossed the line’ over to AI, it felt like I had a big target on my chest,” says Daniel Rowland, LANDR’s head of strategy and an Oscar-winning and Grammy-nominated audio engineer in his own right. Such a vehement reaction seems comical in retrospect because, as Rowland readily admits, “LANDR really wasn’t very good for quite a while”.

Those heated discussions have now largely cooled and LANDR has been quietly getting better and better and better. This audible jump in quality has been driven by an initiative that Rowland spearheaded when he joined the company seven years ago – to build an in-house, all-human mastering team that the AI could learn from.

“We did deals with Warner, with Disney etcetera,” says Rowland. “They send us their music and either I or my team master it. We use the same plug-in stack that the LANDR engine does and every knob we turn, every decision we make, is recorded. The engine is like a baby, watching us and learning from what we do, comparing its output to ours. Effectively, it’s supervised machine learning.”
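For readers curious what “supervised” means in practice, here is a deliberately tiny sketch, not LANDR’s actual engine. It assumes a single made-up input (the measured loudness of an unmastered track) and a single made-up “knob” (the gain an engineer applied), and fits a straight line through the engineer’s decisions. The function names and all of the numbers are hypothetical, chosen only for illustration.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    var = sum((x - mean_x) ** 2 for x in xs)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict_gain(loudness, model):
    """Apply the fitted line to a track the model has not seen."""
    slope, intercept = model
    return slope * loudness + intercept


# Invented training data: the loudness of each unmastered track and the
# gain (in dB) a human engineer chose for it. These are the "labels".
track_loudness = [-20.0, -18.0, -16.0, -14.0]
engineer_gain = [6.0, 4.0, 2.0, 0.0]

model = fit_line(track_loudness, engineer_gain)
suggested = predict_gain(-17.0, model)  # the model's guess for a new track
```

A real mastering engine would learn many settings at once from far richer audio features, but the principle is the same: the human decisions are the target and the model is adjusted until its output resembles them.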

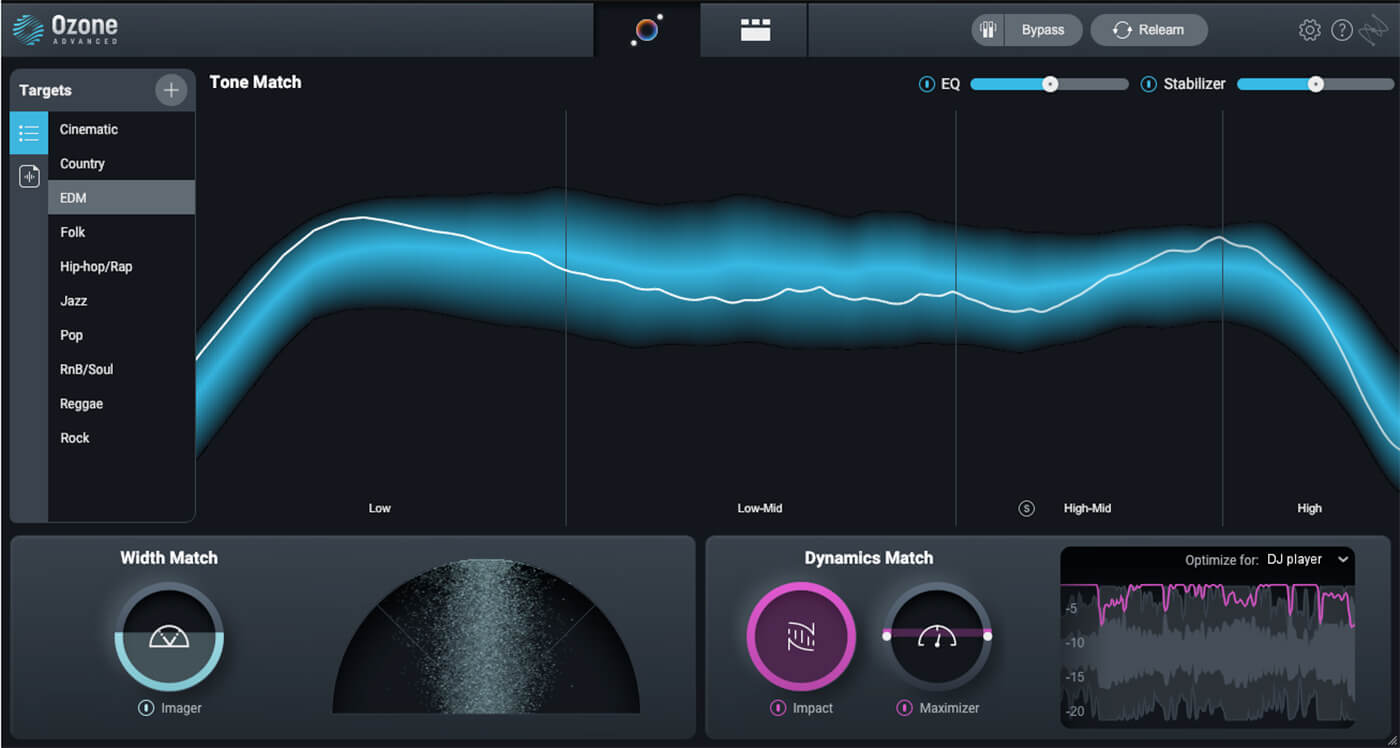

As the efficacy of online services such as LANDR, Cloudbounce and Aria, and offline plug-ins like iZotope’s Ozone, has increased, AI mastering has graduated from a novelty to a genuinely useful resource. However, Rowland is the first to point out that there’s still no comparison to the nuance you get from an experienced engineer, and that the conversation around the technology has largely moved on from fears of job replacement.

“There’s more content out there than every mastering engineer in the world could ever get through,” he says. “So either people learn how to master music themselves, which is not easy, or they hire somebody for $50 at the low end or a professional for maybe $250.”

This leaves a large group of people who either don’t have the funds to pay for human mastering or aren’t at a stage in their career where they even need it. In Rowland’s view, giving these people a viable and affordable option leads to a larger music industry overall.

“These tools just widen the funnel of people coming in and making music,” says Rowland. “Ultimately a percentage of those new creators are going to stick with it, level up, and become professional musicians who hire professional engineers.”

While mastering may have started the conversation, ‘smart’ plug-ins are becoming available for every stage of music production. Companies such as Sonible, Focusrite, and iZotope are at the forefront of AI-assisted mixing, offering compressors, reverbs, and tone-matching technology that allows you to quickly emulate the sonic profile of a beloved snare hit.

These plug-ins undeniably save time and money for composers who need to quickly iterate and revise cues, as well as independent musicians working up demos, but they’re emerging as an unexpectedly valuable learning tool too.

It’s common for beginners and professionals to use reference tracks during the production process, and this is an area where AI tools offer distinct benefits. London-based mixing and mastering engineer James Lewis puts it like this: “When you’re getting started, it’s like looking out at an ocean; it’s massive and trying to navigate your way through it is hard. Reference tracks are like beacons that help you find your location relative to the wider space. AI tools have a lot of value in providing those beacons in the water.”

Being able to quickly get a sense of how your vocal mix fares against the ‘mean average’ pop sound is a great workflow enhancement, but of course this is all just a taste of what’s coming. “In the next five years,” says Rowland, “there will be a button that gets you a baseline mix in major DAWs – I know that for a fact. Will it be a releasable, professional-quality mix? Absolutely not. It’s just a baseline to get people started.”

One-click mixing has the potential to democratise the music-making process, giving people of all skill levels access to results that previously required study or hours of trial and error to achieve. At the same time, such easy-to-use tools could lead to a new kind of ‘preset’ dependency. An artist’s creative and analytical muscles only grow through regular exercise, and while reference points and workflow shortcuts are one thing, AI that gets you closer and closer to the finish line may have detrimental impacts that we don’t yet fully understand.

When it comes to music playback, the most obvious impact of AI has been in the individualised playlists that are now a standard feature of major streaming platforms. Beyond these personalised recommendation algorithms, there are companies using AI to reshape songs themselves to suit the needs of each listener.

Adaptive and generative music is nothing new, but these mainstays of the gaming industry and contemporary music world are being put to new application by fitness and wellness start-ups. Using AI and real-time data streams, companies like Endel and Weav Music are helping establish playback paradigms that challenge our very conception of what a finished song is.

In the case of Weav Run, tracks by artists like Lizzo and Cardi B are deconstructed and remixed into an adaptive format that can seamlessly shift arrangement and BPM to sync with a runner’s footfall. “We started exploring things like, ‘how do we tie the high-intensity part of your workout to a high-intensity part of the song? How do we align the drop of the chorus to the beginning of the workout?’,” says Mani Singh, CTO of Weav Music. “We found that pairing adaptive music with adaptive workouts gives you this super-personalised training that has a very high engagement.”
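The core arithmetic behind syncing a song to a runner’s steps can be sketched in a few lines. This is an illustration only, not Weav’s method: the function name `playback_rate`, the choice of allowing half-time and double-time matches, and the stretch limits of 0.8x to 1.25x are all assumptions made for the example.

```python
import math


def playback_rate(cadence_spm, track_bpm, min_rate=0.8, max_rate=1.25):
    """Return a time-stretch factor that lines a track's beat up with footfall.

    cadence_spm is the runner's steps per minute, track_bpm the song's
    original tempo. The limits are assumed values that keep the stretch
    from becoming too extreme.
    """
    if cadence_spm <= 0 or track_bpm <= 0:
        raise ValueError("cadence and tempo must be positive")
    # A beat can land on every step, every second step, or twice per step.
    candidates = [cadence_spm * m / track_bpm for m in (0.5, 1.0, 2.0)]
    # Pick whichever option needs the least stretching (closest to 1.0x).
    rate = min(candidates, key=lambda r: abs(math.log(r)))
    # Clamp to the allowed range.
    return max(min_rate, min(max_rate, rate))


# A 160 BPM track for a runner taking 170 steps per minute
rate = playback_rate(170, 160)
```

In this sketch a runner at 180 steps per minute listening to a 90 BPM track needs no stretch at all, because the beat can simply fall on every second step. An adaptive format like the one Singh describes would go further and change the arrangement itself, which this example does not attempt.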

The Berlin-based team at Endel takes personalisation even further – using the time of day, ambient lighting, local weather conditions and health data gleaned from wearables to generate and adapt music in real time.

“When we started researching,” says co-founder and lead sound designer Dmitry Evgrafov, “speaking with neuroscientists and scientists who specialised in sound, they all told us it has to be personalised. It has to be a personal listening experience, because that’s the only way you can truly adapt music to the needs of the body.”

Aiming to tackle the ubiquitous 21st-century ills of burnout, insomnia, anxiety and ever-present existential dread, Endel sees hyper-individualised listening as key to a calmer, more mindful daily existence – and the science seems to be on their side. The positive benefits of music and sound therapy are well established, and the potential to augment such therapies with real-time health data has attracted the attention of a number of Silicon Valley developers. Notably, Spotify filed a patent for a similar use of biometric data to modify existing music in 2021.

As the quality of these generative music systems increases, the debate between what music is considered ‘functional’ and what’s ‘artistic’ becomes more and more interesting. Endel maintains that the company is rooted in the ‘functional’ camp, with music serving the primary goal of “positively affecting your cognitive state through the power of sound”. But, simultaneously, high-profile artist collaborations are a central feature of its offering.

As Endel works with the likes of Grimes, Toro Y Moi and Richie Hawtin, the line between ‘functional’ and ‘artistic’ is being blurred. Its latest collaboration, Wind Down, saw Endel work with singer-songwriter James Blake on an evening-focused experience that helps bring the day to a close. A warm, sweeping current of tones, drones and signature vocals, Blake’s contribution to the Endel platform performs its designated function but, beyond relaxation, it feels genuinely engaging and satisfying to listen to as music.

Endel’s generative engine has the potential for moments of musical beauty worthy of Brian Eno himself, and it’s possible that we might be seeing the start of a new musical wellness industry, with pop music’s best and brightest folding meditation and mindfulness into their personal brand.

Given the many controversies surrounding the use and misuse of big data, not everyone will be thrilled at the prospect of biometric music. The question of just how personalised this particular rabbit hole can, or should, get is most likely to be answered by the industry giants who already dominate data collection and health wearables: Google, Meta and Apple.

Put on your dystopia-tinted glasses and it’s not so far-fetched to imagine music applications that integrate data from search and purchase history, direct messages and email, or even eye-tracking and EEG readings from AR wearables. Your future Christmas shopping experience in the Metaverse may not be soundtracked by Mariah Carey but by some calming binaural beats, generated just the way you like them.

That digression aside, AI has enormous potential to enhance and to shape the way we make music. It’s hard to deny the value of tools that abstract away complexity and let people simply have fun with the process, or of musical mediums that fit around your life like sonic memory foam.

The future sounds pretty good and, at least for now, there’s still a place for beating hearts. “AI is not meaningful without real ears or eyes assessing, choosing and steering it,” Evgrafov says. “It’s about how you curate the output; those small details are what makes it artistic – and AI cannot answer those questions for you.”